You're working at a successful company that has grown organically for years. The team has navigated the funding headaches, built a great product, and won customers who love it. A new employee joins this rocket ship, turns to you, and asks: how does this feature actually work? Sometimes the answer is straightforward. Often it's a context treasure hunt, piecing together clues from the memories of the giants on whose shoulders you now stand. Less grandiosely, it's a video huddle that interrupts key minds and forces them to context-switch to your particular question. Let's hope the one person who built it isn't busy, on vacation, or gone. Once the team has gathered and reverse-engineered the answer, we're relieved to have it. Then, as the answer disappears into the collective ether, we think: surely there is a better way?

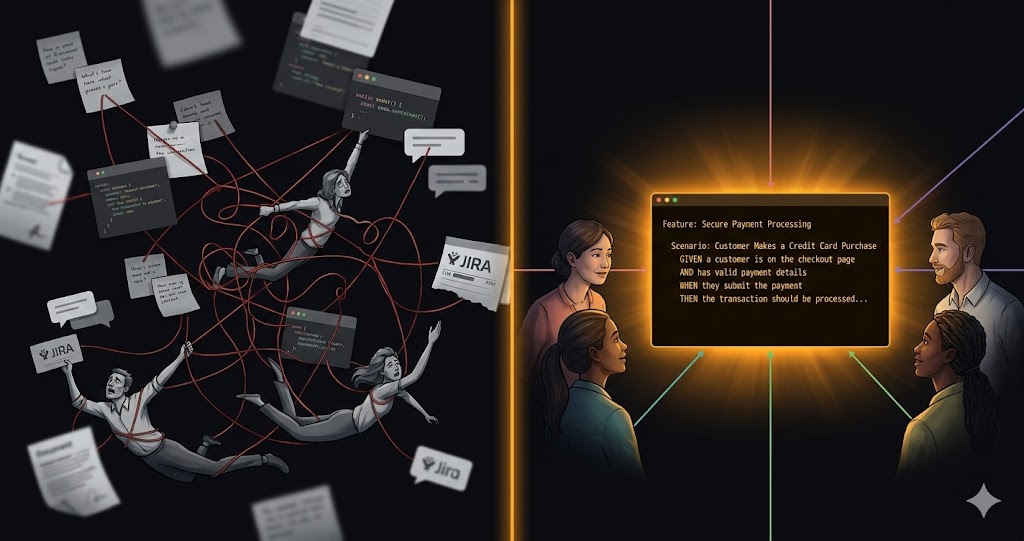

The header image is a deliberately click-baity illustration of this. Real teams live in neither dystopian distress nor utopian perfection. Teams are made of dedicated people who excel in their respective domains. Losing context can be a natural consequence of growth, with every team handling the logic in its own way. Engineering writes integration tests. Product maintains PRDs. Customer Support builds a knowledge base. Customer Success develops its own playbooks. Each team solves the problem for its own context, with its own tools, in its own language. This touches all Four Signals. People lose context as they rotate between teams or leave. Process has no feedback loop connecting intent to implementation to customer experience. Architecture buries rules in conditional logic with no contracts or boundaries. Measure can't tell you if the system does what you think it does because "what you think it does" was never made explicit.

The business logic isn't missing. It's everywhere. The logic exists across a dozen surfaces, maintained by different teams, in different formats, with nothing connecting the dots. In the age of AI, there has to be a better way to bring this together. This article explores what that looks like: the case for managing business logic as a shared, cross-functional asset, illustrated with five use cases.

BDD: Not a Testing Framework, a Business Logic Strategy

Behavior-Driven Development has been around for years, and it has a reputation problem. Most teams associate it with Cucumber, Gherkin syntax, and QA automation. Fair enough. That's how it's been used in a lot of organizations.

"BDD often got shunted into a siloed QA activity, with test engineers translating requirements into scenarios that nobody else read." — Momentic (1)

But the core idea is more powerful than its typical implementation. BDD at its best is a way to express business rules in a format that is simultaneously human-readable and machine-executable. A Gherkin scenario like this:

Given Sarah Chen has a premium account

When she purchases items worth $150

Then she should receive a 10% discount

That's not a test. It's a business rule that Product can validate, Engineering can implement, Support can reference, and QA can automate. One artifact, four audiences, always current, because it runs against the code on every deploy.
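To make "machine-executable" concrete, here is a minimal sketch in plain Python of how the scenario above could be bound to code. It is not the API of Cucumber, Behave, or any other BDD tool; the `step` decorator, `run_scenario`, and `discount_rate` are hypothetical names invented for illustration, and the pricing rule is inferred only from the scenario text (no spend threshold is assumed).

```python
import re
from decimal import Decimal

# Hypothetical pricing rule, inferred only from the scenario text:
# premium accounts get 10% off. No spend threshold is assumed.
def discount_rate(account_tier: str) -> Decimal:
    return Decimal("0.10") if account_tier == "premium" else Decimal("0")

STEPS = []  # (compiled pattern, handler) pairs, in registration order

def step(pattern):
    """Register a handler for scenario lines that fully match `pattern`."""
    def register(func):
        STEPS.append((re.compile(pattern), func))
        return func
    return register

@step(r"Given (?P<name>.+) has a (?P<tier>\w+) account")
def given_account(ctx, name, tier):
    ctx["customer"] = name
    ctx["tier"] = tier

@step(r"When she purchases items worth \$(?P<amount>\d+)")
def when_purchase(ctx, amount):
    ctx["amount"] = Decimal(amount)
    ctx["rate"] = discount_rate(ctx["tier"])

@step(r"Then she should receive a (?P<percent>\d+)% discount")
def then_discount(ctx, percent):
    expected = Decimal(percent) / 100
    assert ctx["rate"] == expected, f"expected {expected}, got {ctx['rate']}"

def run_scenario(text: str) -> dict:
    """Run each non-blank line through the first step whose pattern matches."""
    ctx = {}
    for line in filter(None, (raw.strip() for raw in text.splitlines())):
        for pattern, handler in STEPS:
            match = pattern.fullmatch(line)
            if match:
                handler(ctx, **match.groupdict())
                break
        else:
            raise LookupError(f"no step matches: {line!r}")
    return ctx

SCENARIO = """
Given Sarah Chen has a premium account
When she purchases items worth $150
Then she should receive a 10% discount
"""

if __name__ == "__main__":
    run_scenario(SCENARIO)
    print("scenario passed")
```

The scenario text stays readable by Product and Support, while the step handlers are the only part Engineering has to maintain. If the pricing rule changes and the scenario does not, the `Then` step fails.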

The conversation that produces these scenarios matters more than the syntax. The Three Amigos pattern, getting Product, Engineering, and QA or Support in a room before writing code, is where misalignment surfaces early. Product says "here's the intent." Engineering says "here's what's feasible." Support says "here's what customers will ask about." You write the scenarios together. Misunderstandings die in that room instead of becoming production bugs three months later.

Organize scenarios by business capability, not by sprint or technical architecture. A folder called pricing_rules/ or customer_onboarding/ beats sprint_47_tests/ every time. Features should represent stable business capabilities that persist across releases, not ephemeral user stories that expire after a sprint (2). When someone in support needs to understand how onboarding works, they should go to the onboarding/ folder and read English, not grep through 200 test files.
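One payoff of a capability-based layout is that a table of contents falls out of the folder structure. The sketch below assumes a directory of `.feature` files grouped into top-level folders such as `pricing_rules/`; the function name `index_scenarios` and the file names used in the usage note are hypothetical.

```python
from collections import defaultdict
from pathlib import Path

def index_scenarios(root: Path) -> dict[str, list[str]]:
    """Map each top-level capability folder to the scenario titles beneath it."""
    index = defaultdict(list)
    for feature in sorted(root.rglob("*.feature")):
        # The first path component under root is treated as the capability.
        # A file placed directly in root is listed under its own file name.
        capability = feature.relative_to(root).parts[0]
        for line in feature.read_text(encoding="utf-8").splitlines():
            stripped = line.strip()
            if stripped.startswith("Scenario:"):
                index[capability].append(stripped.split(":", 1)[1].strip())
    return dict(index)
```

Called on a repository root, this returns something like `{"pricing_rules": ["Premium discount", ...]}`, which a support agent can scan without opening a single test file.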

Five Use Cases

1. Cross-Functional Requirements Alignment

The most immediate win. Before a single line of code is written, Product, Engineering, and Support sit down and write Gherkin scenarios together. This is the Three Amigos pattern in action, and it solves the most expensive problem in software: building the wrong thing.

The scenarios become the acceptance criteria. Not a JIRA ticket with a vague description, not a PRD that's open to interpretation. Concrete, testable examples of how the system should behave. When an engineer asks "what happens if the user does X?", the answer is in the scenario. When a support agent asks "is this behavior intentional?", the answer is in the scenario. When Product asks "did we ship what we intended?", the CI pipeline answers that question on every commit.

Teams practicing this report spending less time in back-and-forth clarification cycles, because the upfront conversation surfaces ambiguity before it becomes rework. In one well-documented case, Simon Powers introduced BDD across multiple product teams at a global investment bank. Developer time spent on defects dropped from 35% to 4% over nine months. Not from better testing tools, but from better shared understanding through collaborative scenario creation with strong product owner involvement (3).

2. Living Knowledge Base for Customer Support

Here's where things get interesting for non-engineering teams. If your BDD scenarios are organized by business capability and written in plain language, your support team can use them directly as a reference.

Instead of maintaining a separate knowledge base that drifts from reality the moment a feature changes, support can read the scenario files to understand current system behavior. The scenarios are verified against code on every deploy. If the behavior changes, the scenario changes. If the scenario passes, the behavior is correct. No more "I think it works like this." It either matches the scenario or it doesn't.

This works best when the folder structure mirrors business domains rather than technical ones: customer_onboarding/, billing_rules/, permissions/. Support agents can navigate these intuitively. Some teams add readme.md files in each folder providing additional business context, linking to external requirements, and explaining the domain's significance. Multiple entry points for different audiences, one underlying truth.

3. AI-Powered Business Logic Queries

This is the use case that changes the game. Once your business logic is expressed in structured, consistent, executable Gherkin scenarios, you've created something AI can actually reason about.

Train an AI agent on your scenario repository and it becomes your institutional memory. When a support engineer asks "how does the discount tier work for enterprise customers?", the AI can ground its answer in verified, executable scenarios instead of guessing. The answer is far more likely to be accurate because the scenarios run against the real system on every deploy.

The structured Given-When-Then format turns out to be ideal training data for large language models, far more parseable than wiki pages or scattered docs. This makes sense when you think about it: Gherkin scenarios are consistent in structure, precise in language, and tied to verified behavior. That's exactly the kind of clean, structured input that produces reliable AI output.

This also works internally. New engineers onboarding to a codebase can ask the AI "how does payment processing work?" and get an answer grounded in executable specifications, not outdated wiki pages. The AI serves as a guide to the living documentation, making the whole system more accessible.
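As a rough illustration of grounding answers in scenarios, the sketch below selects the scenarios most relevant to a question and assembles a prompt from them. It swaps in simple retrieval for training, uses naive keyword overlap rather than embeddings, and calls no model API; the function names, the scenario keys, and the onboarding scenario are all hypothetical.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def top_scenarios(question: str, scenarios: dict[str, str], k: int = 2) -> list[str]:
    """Rank scenarios by words shared with the question; return up to k names."""
    q = tokenize(question)
    scored = [(len(q & tokenize(name + " " + body)), name)
              for name, body in scenarios.items()]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [name for _, name in ranked[:k]]

def build_prompt(question: str, scenarios: dict[str, str], k: int = 2) -> str:
    """Assemble a prompt that restricts the model to the retrieved scenarios."""
    names = top_scenarios(question, scenarios, k)
    context = "\n\n".join(f"# {name}\n{scenarios[name]}" for name in names)
    return (
        "Answer using only the verified scenarios below. "
        "If they do not cover the question, say so.\n\n"
        f"{context}\n\nQuestion: {question}"
    )

# Illustrative data: the first scenario is the article's example,
# the second is an invented placeholder.
SCENARIOS = {
    "pricing_rules/premium_discount": (
        "Given Sarah Chen has a premium account\n"
        "When she purchases items worth $150\n"
        "Then she should receive a 10% discount"
    ),
    "customer_onboarding/email_verification": (
        "Given a new customer signs up\n"
        "When they confirm their email\n"
        "Then the account is activated"
    ),
}
QUESTION = "How does the discount work for premium customers?"

if __name__ == "__main__":
    print(build_prompt(QUESTION, SCENARIOS, k=1))
```

The design choice worth noting is the instruction to answer only from the supplied scenarios: the model's output is then traceable to files that the CI pipeline has verified.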

4. AI-Accelerated Scenario Authoring

The Three Amigos conversation is the heart of BDD, but preparing for that conversation used to be a blank-page problem. AI changes the preparation step significantly.

Feed a messy PRD or a feature brief into an AI and get first-draft Gherkin scenarios back. These aren't production-ready; they're conversation starters. The AI can extract business rules from natural language, suggest edge cases you haven't considered, and structure the output in consistent Given-When-Then format. You walk into the Three Amigos meeting with draft scenarios to edit and debate rather than starting from zero.

A word of caution: Richard Lawrence at Humanizing Work found that Copilot's auto-suggested Gherkin scenarios missed the point entirely.

"Pure slop. Technically passable as tests but missing the point of human-readable specifications." — Richard Lawrence, Humanizing Work (4)

The fix wasn't abandoning AI but giving it better instructions. He developed a set of LLM-friendly guidelines for reviewing and rewriting Gherkin, and found that Claude was "actually pretty good" at improving scenario quality when given clear criteria. The lesson: AI-generated scenarios need curation, not blind trust.
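Curation can also be partly mechanized before a human reads the draft. The sketch below is a hypothetical pre-review check, not Lawrence's guidelines (which are not reproduced here): it flags drafts that lack a Given, When, or Then step, that pack in more than one When, or that mention UI mechanics. The word list and the checks themselves are assumptions chosen for illustration.

```python
# Assumed list of words that suggest UI mechanics rather than business behavior.
UI_WORDS = {"click", "clicks", "button", "dropdown", "textbox"}

def review_scenario(text: str) -> list[str]:
    """Return human-readable findings for one draft scenario."""
    steps = [line.strip() for line in text.splitlines() if line.strip()]
    keywords = [s.split()[0] for s in steps]
    findings = []

    for required in ("Given", "When", "Then"):
        if required not in keywords:
            findings.append(f"missing {required} step")

    # Only check ordering when all three keywords are present.
    if not findings:
        order = [keywords.index(k) for k in ("Given", "When", "Then")]
        if order != sorted(order):
            findings.append("steps are not in Given/When/Then order")

    if keywords.count("When") > 1:
        findings.append("more than one When step; consider splitting the scenario")

    for s in steps:
        words = {w.strip(".,").lower() for w in s.split()}
        if words & UI_WORDS:
            findings.append(f"UI detail instead of business behavior: {s!r}")
    return findings
```

An empty list means the draft passed these structural checks, not that it is a good specification. That judgment still belongs in the Three Amigos conversation.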

Tools like SpiraPlan by Inflectra are pushing this further, automatically generating BDD scenarios from user stories and then creating step definitions, achieving high levels of automation in the test creation pipeline (5). The human role shifts from writing scenarios to curating them, which is a better use of everyone's time.

5. BDD as the Verification Layer for AI-Generated Code

This might be the most forward-looking use case, and it's already happening. As AI coding agents become more capable (Claude Code, GitHub Copilot, Cursor), teams need a way to verify that AI-generated implementations actually match business intent. BDD scenarios are that verification layer.

Ankit Jain described this pattern recently in Latent Space: humans define what the system should do through BDD scenarios, AI agents write the implementation, and the BDD framework verifies the output.

"Humans doing what humans are good at: defining what 'correct' means, encoding business logic and edge cases, thinking about what could go wrong. The agent handles the translation from intent to code." — Ankit Jain, Latent Space (6)

This flips the traditional role of BDD. Instead of "scenarios that test human-written code," it becomes "scenarios that define the contract for AI-generated code." The scenarios are the specification, the acceptance criteria, and the safety net all in one. As AI-generated code becomes more prevalent, having a human-readable, executable specification of business intent becomes not just nice to have but essential.
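The "contract" idea can be sketched as a gate that any candidate implementation, human- or agent-written, must pass. In the example below the names `CONTRACT`, `verify`, and both `agent_attempt` functions are hypothetical; the first contract row is derived from the discount scenario earlier in the article, and the second is an assumed edge case added for illustration.

```python
from decimal import Decimal
from typing import Callable

# (account tier, order total, expected discount amount).
# Row 1 follows the article's scenario; row 2 is an assumed edge case.
CONTRACT = [
    ("premium", Decimal("150"), Decimal("15.00")),
    ("basic", Decimal("150"), Decimal("0")),
]

def verify(candidate: Callable[[str, Decimal], Decimal]) -> list[str]:
    """Run a candidate implementation against every contract row."""
    failures = []
    for tier, total, expected in CONTRACT:
        actual = candidate(tier, total)
        if actual != expected:
            failures.append(
                f"{tier} account, ${total}: expected {expected}, got {actual}"
            )
    return failures

# Stand-in for generated code that ignores the account tier.
def agent_attempt_1(tier: str, total: Decimal) -> Decimal:
    return total * Decimal("0.10")

# Stand-in for a corrected attempt.
def agent_attempt_2(tier: str, total: Decimal) -> Decimal:
    return total * Decimal("0.10") if tier == "premium" else Decimal("0")

if __name__ == "__main__":
    for attempt in (agent_attempt_1, agent_attempt_2):
        problems = verify(attempt)
        print(attempt.__name__, "accepted" if not problems else problems)
```

The first attempt is rejected on the edge case a human thought to encode, which is the division of labor Jain describes: people define what "correct" means, and the gate checks every implementation against it.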

Getting Started Without Boiling the Ocean

If any of these use cases resonated, the temptation is to go big. Don't. The teams that succeed with this start small and prove value before scaling.

Pick your biggest pain point. What business rule generates the most support tickets? What feature breaks most often in regression? Where does onboarding stall because new engineers can't figure out how something works? Start there.

Scope it tight: one team, one business domain, three to four months. Run Three Amigos sessions before every feature in that domain. Commit Gherkin scenarios alongside the code. Measure what changes: reduction in "how does this work?" interruptions, time to onboard new engineers, support ticket volume for that domain.

The tooling is secondary to the practice. SpecFlow for .NET teams, Behave for Python, Karate for API-first architectures, Cucumber for most others (7). Pick what fits your stack and move on. The tool matters far less than the habit of writing scenarios collaboratively before writing code.

Don't try to retroactively document years of business logic. That's a trap. Instead, adopt the practice going forward and let coverage grow organically. Every new feature gets scenarios. Every bug fix gets a scenario that would have caught it. Over time, your most important business logic becomes documented, tested, and accessible. Not because someone mandated a documentation sprint, but because it's a natural byproduct of how you build software.

What is your process?

Usually your business logic isn't missing; it's everywhere.

It's in your tests. It's in your knowledge base. It's in your PRDs. It's in your Slack threads, your JIRA comments, your support tickets, and the heads of the people who've been here the longest. Every team has their fragment. Every fragment has value. Many representations of the same business rules, none of them provably correct, none of them in sync.

That's the bug. Not the absence of documentation, but the absence of connection.

BDD, done right, gives you that connection. A single source of truth that Product can read, Engineering can execute, Support can reference, and AI can learn from. One place where "how does this actually work?" has a verifiable answer.

Every startup has business logic. The question is whether yours is a system or a scavenger hunt.

How are you documenting your business logic, and how does it span the Four Signals?

References

1. Momentic, "How AI Breathes New Life Into BDD," August 2025. https://momentic.ai/blog/behavior-driven-development

2. monday.com, "Behavior-driven development (BDD): an essential guide for 2026," November 2025. https://monday.com/blog/rnd/behavior-driven-development/

3. Powers, S., "A case study for BDD in improving throughput and collaboration," Cucumber Blog / Adventures with Agile, April 2017. https://cucumber.io/blog/bdd/improving-throughput-and-collaboration/

4. Lawrence, R., "AI for better BDD," Humanizing Work, December 2025. https://www.humanizingwork.com/ai-for-better-bdd/

5. Inflectra / EuroSTAR Conference, "Operationalizing BDD Scenarios Through Generative AI," May 2024. https://conference.eurostarsoftwaretesting.com/operationalizing-bdd-scenarios-through-generative-ai/

6. Jain, A., "How to Kill the Code Review," Latent Space, March 2026. https://www.latent.space/p/reviews-dead

7. 303 Software, "BDD and Cucumber Reality Check 2025," September 2025. https://www.303software.com/insights/behavior-driven-development-cucumber-testing-2025-reality