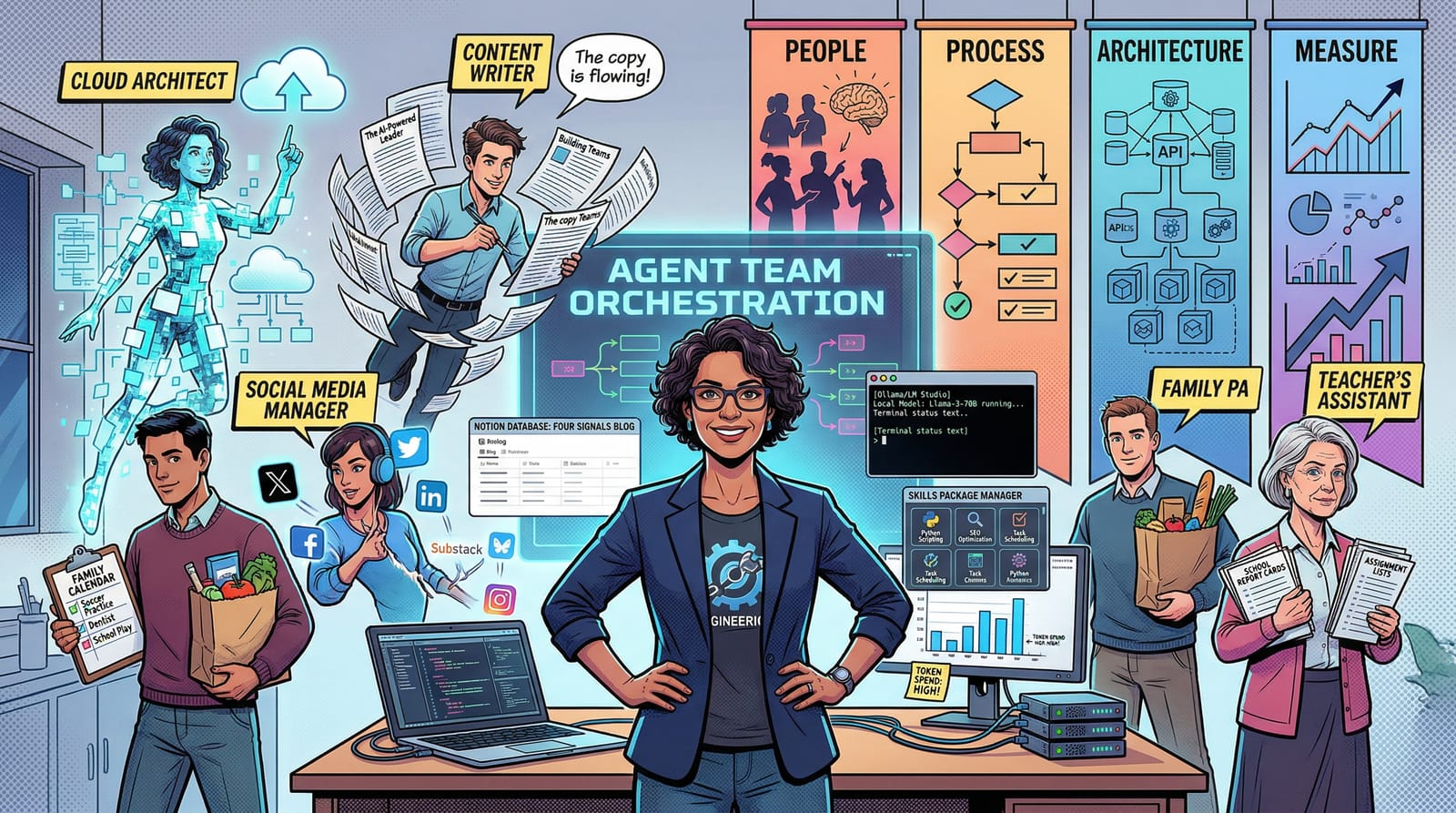

In March I transitioned out of my role as CTO of Stratus (stratus.build). After leading a stellar team of 30+ engineers through AI modernization efforts, it was time to take a deeper dive into the current state of the AI toolchain. Doom-scrolling my way through the latest and greatest is one thing, but how does it play out in practice? Here's what a month of hands-on work revealed.

The Blog Redesign

Cloud Architect

The first testbed was my blog, foursignals.dev, which I rebuilt from the ground up on a Notion-backed content management system. A Notion database is the single source of truth for all blog content: the build pulls pages from it and caches images locally, and the site auto-deploys whenever content changes. The agent handled the infrastructure decisions, dependency selection, build pipeline configuration, and deployment setup. Prompting for architecture felt like briefing a staff engineer: describe the constraints, state the requirements, and let them propose the solution.
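One detail behind "caching images locally": Notion's API serves uploaded files through signed URLs that expire, so a build that links to them directly will eventually show broken images. A minimal sketch of one way to handle it, assuming a local cache folder (the `public/notion-cache` path and the `cached_image_path` helper are hypothetical, not part of the actual site), derives a stable filename from the URL with its signature stripped:

```python
import hashlib
from pathlib import PurePosixPath
from urllib.parse import urlsplit


def cached_image_path(url: str, cache_dir: str = "public/notion-cache") -> str:
    """Map a (possibly signed, expiring) image URL to a stable local path.

    The query string is dropped before hashing because signed URLs carry a
    fresh signature on every fetch while the underlying file is unchanged.
    """
    parts = urlsplit(url)
    stable = f"{parts.netloc}{parts.path}"  # host + path only, no signature
    digest = hashlib.sha256(stable.encode("utf-8")).hexdigest()[:16]
    # Keep the original extension so the browser gets a sensible content type.
    suffix = PurePosixPath(parts.path).suffix.lower() or ".bin"
    return f"{cache_dir}/{digest}{suffix}"
```

Dropping the query string is what lets the cache hit across builds. If the same path could ever point at new content, include a last-edited timestamp in the hashed string as well.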

Content Writer & Publisher

I developed a semi-manual automation flow for writing articles. I started the research in tools like NotebookLM and Gemini Deep Research to investigate topics of interest, then developed high-level story arcs to iterate on ideas. I also built a voice and editorial skill that enforces my writing style across all content. It catches AI-generated phrasing, removes filler, applies tone rules, and checks story-arc coherence. Think of it as a brand editor that runs as a final pass on everything before it goes out. With this skill I turned those arcs into a draft article. Heading towards publication, I would read the draft and dictate the changes I wanted to the AI. Then came a final pass to make it authentic; here I actually used the keyboard 🙂. The semi-autonomous iteration pattern proved most useful here: AI took the chore out of writing, while the output stayed authentic and original enough for me to trust it. There is possibly more to automate, but it's a delicate balance between a good story and AI slop.
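The voice skill itself is a prompt-driven editor, and its rules aren't published here. As a rough illustration of the mechanical part only, here is a hypothetical lint pass that flags stock AI phrasing and filler words. The phrase lists are placeholders, and unlike the skill it flags rather than rewrites:

```python
import re
from dataclasses import dataclass

# Placeholder rule lists (hypothetical; not the actual skill's rules).
AI_TELLS = ["delve into", "in today's fast-paced world", "it's worth noting that", "a testament to"]
FILLER = ["very", "really", "just", "actually", "basically"]


@dataclass
class Finding:
    kind: str    # "ai-tell" or "filler"
    phrase: str
    count: int


def lint_voice(text: str) -> list[Finding]:
    """Count occurrences of flagged phrases and filler words in a draft."""
    lowered = text.lower()
    findings: list[Finding] = []
    for phrase in AI_TELLS:
        n = lowered.count(phrase)
        if n:
            findings.append(Finding("ai-tell", phrase, n))
    for word in FILLER:
        # Word boundaries stop "very" from matching inside "every".
        n = len(re.findall(rf"\b{re.escape(word)}\b", lowered))
        if n:
            findings.append(Finding("filler", word, n))
    return findings
```

A pass like this is cheap enough to run on every draft; the judgement calls (tone, story-arc coherence) stay with the model and the human.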

Brand Design and Voice

The brand system, Four Signals, is built around the four pillars I use for engineering leadership: People, Process, Architecture, and Measure. With the redesign, AI entered the design workflow itself. I came across a TikTok by Jens Heitmann showing a complete website redesign workflow using Google Stitch, MCP servers, and a design skill called UI/UX Pro Max. The creator coded nothing. The output looked production-grade. That was the catalyst. I ran the same workflow against foursignals.dev: described the site in terms of brand intent in Google Stitch, exported a machine-readable design system (colours, type scales, spacing rules, component behaviour), then wired it into my coding agent via MCP. The agent pulled the design tokens, cross-referenced them against a database of 57 UI styles and 95 colour palettes, generated the components, and assembled the pages. The whole process compressed what would have been a week of design-to-code into an afternoon. No Figma opened, no manual CSS decisions, no browser-loop iteration. I wrote about the full workflow in App Design at the Speed of AI.
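Google Stitch's export format isn't described in this post, so the sketch below assumes a simple nested JSON of design tokens (the keys and hex values are made up). It shows one step an agent might perform after pulling tokens over MCP: flattening them into CSS custom properties that generated components can reference.

```python
def tokens_to_css(tokens: dict, selector: str = ":root") -> str:
    """Flatten nested design tokens into CSS custom properties.

    {"color": {"primary": "#0B5FFF"}} becomes "--color-primary: #0B5FFF;".
    """
    lines: list[str] = []

    def walk(node: dict, prefix: list[str]) -> None:
        for key, value in node.items():
            path = prefix + [str(key)]
            if isinstance(value, dict):
                walk(value, path)  # descend into token groups
            else:
                lines.append(f"  --{'-'.join(path)}: {value};")

    walk(tokens, [])
    return selector + " {\n" + "\n".join(lines) + "\n}"


# Hypothetical token set, for illustration only.
example_tokens = {
    "color": {"primary": "#0B5FFF", "ink": "#111111"},
    "space": {"md": "16px"},
}
```

Generating variables rather than hard-coded values keeps the design system as the single source: change a token, regenerate, and every component follows.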

I also used a marketing analysis skill and the brand voice skill to stress-test what the website was actually communicating. Is it clear this is a consultancy, or does it read like a personal blog? Is the messaging aimed at the right audience? Does the copy convey authority or just enthusiasm? The skills ran what felt like an interview, interrogating every section of the homepage and pulling out what the site was saying versus what I intended it to say. Some of the gaps were surprising. The back-and-forth between the marketing analysis and my own intent honed the homepage copy into something that accurately represents what Four Signals is and who it's for.

Discover Brand Skill

Social Media Manager

The goal was to take a single long-form article and automatically produce derivative content (LinkedIn posts, tweet threads, scheduling metadata), all flowing from the same Notion source of truth. All posts now go out automatically from a content calendar to Twitter, Bluesky, Substack, and LinkedIn. I orchestrated the posting through OpenClaw, which handled cross-platform distribution. I wrote about building this system in Meet Lionel - The OpenClaw Social Media Manager. One interesting wrinkle: Substack doesn't have a public API, so I got OpenClaw to write its own Chrome extension to work around the limitation and make posting easier. This worked brilliantly right up until it didn't. An agent misconfiguration caused the same content to be pushed out multiple times, flooding my feeds with duplicate posts. It was the kind of mistake that's both embarrassing and instructive: autonomous agents are powerful, but without proper guardrails and idempotency checks, they'll happily repeat themselves until someone notices. Lesson learned; safeguards added.
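The duplicate-post incident is the classic case for an idempotency key. A minimal sketch, assuming each scheduled post can be identified by platform, content ID, and calendar slot (hypothetical fields, not OpenClaw's actual schema), records a hash of that triple and refuses to send the same one twice:

```python
import hashlib
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PostLedger:
    """Remembers which (platform, content, slot) combinations were already published."""
    sent: set[str] = field(default_factory=set)

    @staticmethod
    def key(platform: str, content_id: str, slot: str) -> str:
        # Same post, same platform, same calendar slot -> same key.
        raw = f"{platform}|{content_id}|{slot}"
        return hashlib.sha256(raw.encode("utf-8")).hexdigest()

    def publish_once(self, platform: str, content_id: str, slot: str,
                     send: Callable[[], None]) -> bool:
        """Call send() only if this key has not been seen; return whether it ran."""
        k = self.key(platform, content_id, slot)
        if k in self.sent:
            return False
        send()
        self.sent.add(k)  # recorded only after send() returns without raising
        return True
```

The ledger here lives in memory; a real one needs durable storage shared by every agent that can post. The ordering matters too: recording after the send means a crash in between can still double-post, while recording first means a crash can silently drop a post.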

Personalised Agentic News

I built an experimental agentic news feed, a system that monitors sources across my areas of interest, summarises relevant content, and surfaces it for review. The idea is to replace doom-scrolling with a curated, AI-driven briefing. Rather than passively consuming whatever the algorithms serve up, the agent actively hunts for content that matches my professional interests and filters out the noise. Still rough, but the bones are there: agents doing the reading, humans doing the curation. The goal is a daily digest that keeps me informed without the time sink of manually trawling feeds. I explored this further in Claude Code vs Kimi Code: Two Brilliant Agents, One Human Still Required, where I tested a multi-agent pipeline to collect and score articles based on user-defined personas.
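The pipeline in that article uses agents to score articles against personas. As a much simpler stand-in, and explicitly not that method, here is a keyword-weighted score that ranks articles for a persona expressed as term weights. It handles single words only, and the weights and sample data are hypothetical:

```python
import re


def score_article(text: str, persona_keywords: dict[str, float]) -> float:
    """Weighted keyword hits, normalised by article length in words."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    counts: dict[str, int] = {}
    for w in words:
        counts[w] = counts.get(w, 0) + 1
    raw = sum(weight * counts.get(term, 0) for term, weight in persona_keywords.items())
    return raw / len(words)


def top_articles(articles: list[tuple[str, str]], persona_keywords: dict[str, float],
                 k: int = 5) -> list[str]:
    """Return up to k titles with a positive score, best first."""
    scored = [(score_article(body, persona_keywords), title) for title, body in articles]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [title for score, title in scored[:k] if score > 0]
```

A heuristic like this makes a useful baseline: if an LLM scorer can't beat it on your own feeds, the extra token spend isn't buying much.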

Family Life

Family PA

I've got an OpenClaw agent sending a daily summary email to my wife and me with all the calendar events, to-do items, and upcoming birthdays. We can both reply to it and add things to do, so we get a shared sense of what's important and what needs doing. I can also interact with it on the go from my mobile using Telegram. It's a small thing, but having a shared daily briefing that both of us contribute to has genuinely improved how we coordinate family logistics.
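The PA's prompt and data sources aren't shown here. One small piece worth getting right in any daily briefing is the "upcoming birthdays" window, which has to wrap around the new year and cope with 29 February. A minimal sketch, with the 28 February fallback as an assumed convention and all names hypothetical:

```python
from datetime import date


def days_until_birthday(birthday: date, today: date) -> int:
    """Days from today to the next occurrence of the birthday's month and day."""
    def occurrence(year: int) -> date:
        try:
            return birthday.replace(year=year)
        except ValueError:
            # 29 February in a non-leap year: fall back to 28 February (assumed convention).
            return date(year, 2, 28)

    nxt = occurrence(today.year)
    if nxt < today:  # already passed this year, so look at next year
        nxt = occurrence(today.year + 1)
    return (nxt - today).days


def upcoming_birthdays(people: dict[str, date], today: date,
                       window_days: int = 14) -> list[tuple[str, int]]:
    """(name, days away) for birthdays within the window, soonest first."""
    hits = [(name, days_until_birthday(bday, today)) for name, bday in people.items()]
    return sorted([h for h in hits if h[1] <= window_days], key=lambda h: h[1])
```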

Teacher's Assistant

One of the more satisfying projects was building an automation layer on top of Seattle Public Schools' Schoology/PowerSchool parent portal. The parent dashboard is, to put it diplomatically, not fit for purpose: empty dashboards, no working email notifications, no calendar sync, and no useful API. OpenClaw reverse-engineered the internal endpoints, mapped out the authentication flow, and built an agent specification that iterates over all four of my children's accounts, pulling overdue assignments, upcoming work, recently graded items, and current grades by course. The goal: a weekly Friday email digest that actually tells us what's going on, replacing the broken UI we'd been clicking through to piece together who was missing what. The agent prompt ended up being a detailed technical specification document, not a few lines of instructions.
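The portal's internal endpoints aren't documented in the post, so this sketch leaves fetching out entirely and covers only the digest step. It assumes each child's assignments have already been normalised into a hypothetical `Assignment` record:

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Assignment:
    child: str
    course: str
    title: str
    due: date
    submitted: bool
    grade: Optional[str] = None  # None until the teacher grades it


def weekly_digest(items: list[Assignment], today: date) -> dict[str, dict[str, list[str]]]:
    """Group assignments per child into overdue, due within 7 days, and graded."""
    horizon = today + timedelta(days=7)
    digest: dict[str, dict[str, list[str]]] = {}
    for a in sorted(items, key=lambda x: x.due):
        buckets = digest.setdefault(a.child, {"overdue": [], "upcoming": [], "graded": []})
        label = f"{a.course}: {a.title} (due {a.due.isoformat()})"
        if a.grade is not None:
            buckets["graded"].append(f"{a.course}: {a.title} -> {a.grade}")
        elif a.submitted:
            continue  # handed in, awaiting a grade: nothing for a parent to do
        elif a.due < today:
            buckets["overdue"].append(label)
        elif a.due <= horizon:
            buckets["upcoming"].append(label)
    return digest
```

Every child gets an entry even when all buckets are empty, so a quiet week reads as "nothing due" rather than "data missing".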

Board Game Designer

Because apparently a month off isn't enough, I also forked a Splendor++ mod for Tabletop Simulator: I extracted the save file, downloaded all the hosted image assets, and wrote an agent prompt to replace the Noble cards with photos of my dad. I also created pirate-themed reference cards for the board game "Plunder: A Pirate's Life", iterating through multiple design passes to get the layout right. The agent handled the research into print dimensions, rules summarisation, and layout validation across each iteration.
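Tabletop Simulator saves are JSON, and custom decks and images reference their artwork by URL. A minimal sketch of the "download all hosted image assets" step, assuming asset fields are named with a `URL` suffix (such as `FaceURL`, `BackURL`, or `ImageURL`), walks the parsed save and collects the unique links to fetch:

```python
def collect_asset_urls(save: object) -> list[str]:
    """Walk a parsed Tabletop Simulator save and collect URL-valued fields.

    Assumes asset fields are keys ending in "URL" (e.g. FaceURL, BackURL,
    ImageURL); adjust the test below if a mod stores them differently.
    """
    found: list[str] = []

    def walk(node: object) -> None:
        if isinstance(node, dict):
            for key, value in node.items():
                if isinstance(value, str) and key.endswith("URL") and value.startswith("http"):
                    found.append(value)
                else:
                    walk(value)
        elif isinstance(node, list):
            for item in node:
                walk(item)

    walk(save)
    return list(dict.fromkeys(found))  # de-duplicate, keeping first-seen order
```

Card sheets are often shared between decks, so de-duplicating before downloading avoids fetching the same image several times.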

Agentic Engineering

OpenClaw and Hermes Agents

A lot of the work described above was handled via OpenClaw running locally, and more recently I've been experimenting with the Hermes agent as well. OpenClaw handled the social media orchestration, task routing, and multi-step workflows. Hermes brought a more conversational, less structured interaction model that suits exploratory work. The pattern across both is consistent: the agent handles research and implementation guidance; I handle the judgment calls and context. The real skill isn't prompting; it's knowing when to intervene and when to let the agent run.

Local Models with Ollama and LM Studio

I've been experimenting with Ollama and LM Studio for running local models. Google's Gemma 4 is surprisingly fast and useful on my Mac Studio. The goal is to have local AI infrastructure that agents can call into: generate an image, analyse a screenshot, process a document, all without hitting external APIs or burning tokens. Local models change the economics of agent work. When you're not paying per token, you can afford to let agents iterate more freely, run more experiments, and fail cheaply.
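For the "agents call into local models" idea, Ollama exposes an HTTP API on localhost (port 11434 by default). A minimal standard-library sketch of a non-streaming call to its `/api/generate` endpoint follows. The model name is whatever tag you have pulled locally; no specific Gemma tag is assumed here.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send one prompt and return the model's text from the "response" field."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

Usage would look like `generate("<your-local-model-tag>", "Summarise this document: <text>")` with the Ollama server already running. Because nothing is billed per token, an agent can call this in a loop without the cost checks a cloud call would need.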

Cloud Models: Anthropic, Kimi Code, and OpenRouter

The cloud side of the stack is where the heavy lifting happens. Claude handles the complex reasoning, architecture decisions, and long-context work. Kimi Code has been interesting for certain coding tasks, and I compared the two directly in Claude Code vs Kimi Code. OpenRouter provides the flexibility to route between models depending on the task, balancing cost against capability. The multi-model approach isn't just about finding the best model; it's about matching the right model to the right job. Not every task needs the most expensive option.
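OpenRouter puts many providers' models behind one API, so routing largely comes down to choosing a model ID per request. A sketch of the cost-versus-capability trade-off, with hypothetical model IDs, tiers, and prices (not real OpenRouter slugs or rates), picks the cheapest model that meets a task's required tier:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    model_id: str        # hypothetical identifier, not a real OpenRouter slug
    tier: int            # rough capability tier: 1 = light, 3 = heaviest reasoning
    usd_per_mtok: float  # illustrative blended price per million tokens


# Hypothetical mapping from task type to the minimum tier it needs.
TASK_TIERS = {"summarise": 1, "code-edit": 2, "architecture": 3}


def pick_model(options: list[ModelOption], required_tier: int) -> ModelOption:
    """Cheapest option whose capability tier meets the task's requirement."""
    eligible = [o for o in options if o.tier >= required_tier]
    if not eligible:
        raise ValueError(f"no model meets tier {required_tier}")
    return min(eligible, key=lambda o: o.usd_per_mtok)
```

A price of zero for a local model slots naturally into the same table, which is one way to let local and cloud models share a single routing rule.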

Agent Package Management and Skills

This is where the biggest gap exists. All the knowledge that lives in files (skills, prompts, tools, personas, interoperability configs, plugins) needs to be installable, shareable, and portable across different agents. Right now, every time I switch between agents, I'm manually copying context, rewriting prompts, and adapting configurations. There's no package manager for agent skills. What I want to see is a solid package manager and editor that hooks into running agents, monitors their sessions, has a task board, and lets you browse, search, and share skills that move between agents. The agents are capable enough. The management layer around them is what's missing. For a deeper look at the terminology and frameworks shaping this space, I wrote AI Agents: Cut Through the Jargon.
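Without a package manager, the bare minimum is copying files around by hand. To make the gap concrete, here is a deliberately naive, hypothetical sketch of the "install once, use in every agent" step: it copies a skill folder into each agent's skills directory. The `SKILL.md` marker file and the target directories are assumptions; each agent has its own conventions, which is exactly what a real tool would need to abstract away.

```python
import shutil
from pathlib import Path


def install_skill(skill_dir: Path, agent_skill_roots: list[Path]) -> list[Path]:
    """Copy one skill folder into each agent's skills directory.

    Expects skill_dir to contain a SKILL.md file (an assumed convention).
    Existing copies are replaced so every agent ends up with the same version.
    """
    if not (skill_dir / "SKILL.md").is_file():
        raise FileNotFoundError(f"{skill_dir} has no SKILL.md")
    installed: list[Path] = []
    for root in agent_skill_roots:
        target = root / skill_dir.name
        if target.exists():
            shutil.rmtree(target)  # replace rather than merge stale files
        shutil.copytree(skill_dir, target)  # also creates missing parent dirs
        installed.append(target)
    return installed
```

What this lacks is everything that makes a package manager useful: versions, dependencies, per-agent format translation, and a registry to search.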

The Future

The real insight from this month is that the amount of code I actually write is now minimal. Minimal. I went from spending my days in an IDE to spending them orchestrating agents and watching them execute. The bottleneck isn't writing code. It's designing and monitoring agents, checking token spend, verifying output quality, and deciding what to do next. The IDE is becoming a sidecar. The main event is the orchestration layer. As I wrote in AI Doesn't Fix Your Org. It Amplifies It., this tooling amplifies whatever is already there, strengths and weaknesses alike.

I've got an ambitious goal: a Jarvis-style agent team running within the next month. Not just task automation, but agent swarms that can take an idea or a plan and build a whole product from start to finish. Define the product, break it into work streams, assign agents to each stream, monitor progress, review output, ship. My main constraint is token spend, which is a strange new variable to optimise around. This whole experience has been pure ADHD joy, the hyperfocus sweet spot where every rabbit hole connects to the next.
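Treating token spend as a constraint means checking it before work starts, not after the bill arrives. A minimal sketch of a shared budget for an agent swarm, assuming each agent can estimate its next call's token cost up front (the class and agent names are hypothetical):

```python
from dataclasses import dataclass, field


@dataclass
class TokenBudget:
    """Tracks token spend per agent against one shared cap (hypothetical guard)."""
    cap_tokens: int
    spent: dict[str, int] = field(default_factory=dict)

    @property
    def total(self) -> int:
        return sum(self.spent.values())

    def charge(self, agent: str, tokens: int) -> bool:
        """Record usage; return False and record nothing if it would exceed the cap."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        if self.total + tokens > self.cap_tokens:
            return False
        self.spent[agent] = self.spent.get(agent, 0) + tokens
        return True
```

Because real usage is only known after a call returns, a fuller version would reconcile these estimates against the usage figures each provider reports, and use the per-agent breakdown to spot which work stream is burning the budget.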

Finally, the job hunt begins. I'm looking to connect with SME owners who have strong business ideas but need technology to set them apart, or startups that need an engineering leader who's been in the trenches with both legacy systems and the agentic future.

Let's talk. Get in touch →